It’s been a while since my last post. Currently, I’m diving into Docker. Docker is like Vagrant, but for Linux Containers (LXC). Both tools are amazing. If you find LXC interesting, you will love Docker too.

So what is this Docker?

Docker is an open-source engine that automates the deployment of any application as a lightweight, portable, self-sufficient container that will run virtually anywhere.

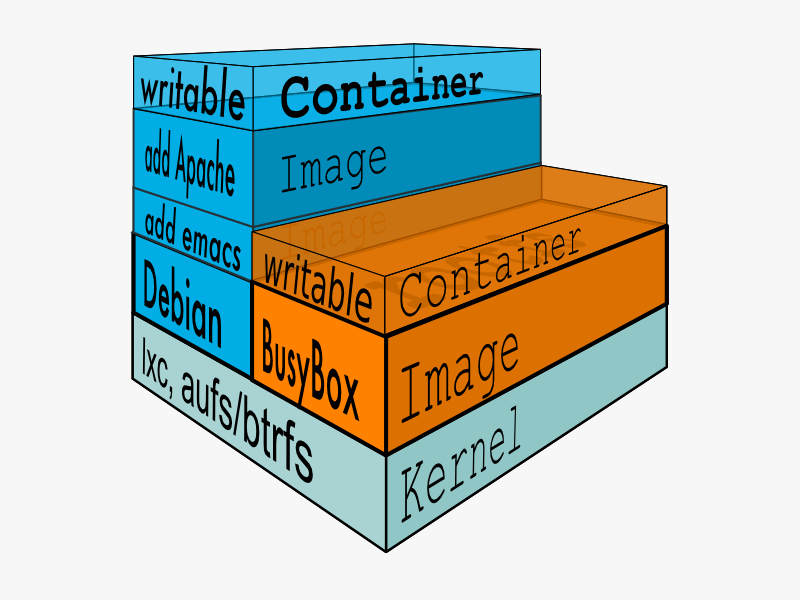

That definition says a lot. To get familiar with Docker, I recommend completing Docker’s interactive command-line tutorial. Once you have finished and wonder how it all works, look at the illustrated glossary to understand what “Kernel”, “Image”, “Container”, etc. mean and how they work together.

If you are still interested, it is time to install Docker and get your hands on it. After installation, the docker command should be on your system’s path. In the current version it is sometimes possible to work as a non-root user. If you get strange errors but want to work as non-root, try this:

sudo chgrp $(groups |cut -d' ' -f1) /var/run/docker.sock
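Before changing the group of the socket, it can help to check whether the current user can already reach it. Here is a minimal sketch, assuming the socket lives at /var/run/docker.sock as in the command above. The helper name `can_access_socket` is made up for this example.

```shell
# Hypothetical helper: reports whether the current user may read and
# write the given path (by default the Docker socket used above).
can_access_socket() {
    sock="${1:-/var/run/docker.sock}"
    if [ -r "$sock" ] && [ -w "$sock" ]; then
        echo "ok: $sock is accessible as $(id -un)"
    else
        echo "no access: $sock (check group ownership with: ls -l $sock)"
        return 1
    fi
}
```

Calling `can_access_socket` without arguments checks the default socket path. If it reports no access, the chgrp command above is one way to fix that.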

Service

I’m a newbie to Docker, and the first thing I had to learn is that the Docker developers think of containers as (isolated) services. This is logical, given the nature of kernel-level isolation. The memory state of a container is not preserved when the container is stopped, but its file-system changes are. So state is the key point, and we need to be careful with it. A web server can easily be built as an ephemeral service, but a database cannot: it needs persistent storage. That is where volumes come into play.
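To see the difference between memory state and file-system state, you can run a short-lived container that writes a file and then ask Docker what changed. This is only a sketch: the container name `state-demo`, the file `/state.txt` and the debian image are arbitrary choices for illustration.

```shell
# Sketch: run a short-lived container that writes a file, then list the
# file-system changes Docker kept for the stopped container.
show_persisted_changes() {
    name="${1:-state-demo}"
    # The process (and its memory) ends as soon as the command returns.
    docker run --name "$name" debian sh -c 'echo hello > /state.txt' || return 1
    # The stopped container still holds its file-system changes.
    docker diff "$name"
    # Removing the container discards those changes.
    docker rm "$name"
}
```

Run `show_persisted_changes` and look at the output of the `docker diff` step: the written file should show up there even though the container is no longer running.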

Docker volumes

Data volumes are designated directories that bypass the Docker Union File System and live directly under /var/lib/docker/vfs/[volume Id]. (The volume ID can be found with docker inspect container_id.)
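Instead of scanning the whole docker inspect output, the volume section can be printed on its own with a format template. This is a sketch under an assumption: recent Docker releases expose that section as `.Mounts`, while older releases such as the one described here used a `.Volumes` field, so the field name is a parameter. The helper name `volume_info` is hypothetical.

```shell
# Sketch: print only the volume/mount section of a container's metadata.
# Assumption: the field is ".Mounts" on recent Docker versions and
# ".Volumes" on older ones; pass the field name as second argument.
volume_info() {
    container="$1"
    field="${2:-Mounts}"
    docker inspect --format "{{ json .$field }}" "$container"
}
```

For example, `volume_info container_id` on a recent version, or `volume_info container_id Volumes` on an old one.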

Therefore they are always mapped to the host file system under the hood, which in my opinion reduces the need to map volumes to an explicit host file-system location. But currently data volumes are always tied to containers (even if those containers don’t need to run), and this leads people to use “data-only” containers.
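The “data-only” container pattern can be sketched roughly like this. The container name `dbdata`, the path `/data` and the debian image are assumptions picked for the example, not something prescribed by Docker.

```shell
# Sketch of the "data-only container" pattern.
create_data_container() {
    # Declares a volume at /data; the container exits immediately,
    # but the volume stays tied to it.
    docker run -v /data --name dbdata debian true
}

use_data_container() {
    # A second container mounts every volume of dbdata and writes into it.
    docker run --volumes-from dbdata debian sh -c 'date > /data/last-run'
}
```

The data container never needs to run again. Any later container started with `--volumes-from dbdata` sees the same /data directory.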

Dockerfile

And of course there is a file that defines how a container image is built: a simple text file named Dockerfile. The format is easy to understand and learn. I’m still working out how my Dockerfiles should look. Should there be one single-purpose Dockerfile for every service, or some kind of Dockerfile tree? Does it really matter to Docker, or can it cache images on a per-RUN-command basis? (I’m currently unsure.) So I still need to gather some experience here. However, I have my first two trusted images on the Docker Index. They are also available on GitHub.
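One way to find out how caching behaves is to build the same Dockerfile twice and compare the output of both builds. The following is a minimal sketch. The image tag `cache-demo`, the installed package and the helper names are made up for this example.

```shell
# Sketch: write a tiny Dockerfile with two RUN steps into a directory.
write_demo_dockerfile() {
    dir="$1"
    mkdir -p "$dir"
    cat > "$dir/Dockerfile" <<'EOF'
FROM debian
RUN apt-get update && apt-get install -y curl
RUN echo "built by cache-demo" > /built.txt
CMD ["cat", "/built.txt"]
EOF
}

# Build the same Dockerfile twice in a row.
build_twice() {
    dir="$1"
    write_demo_dockerfile "$dir"
    docker build -t cache-demo "$dir"
    # If instructions are cached individually, this second build
    # should report cached steps instead of running them again.
    docker build -t cache-demo "$dir"
}
```

After `build_twice /tmp/cache-demo`, changing only the second RUN line and building again should show whether the first RUN step is reused.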

Useful docker commands

# Removes all Docker containers, but not images

docker rm $(docker ps -a -q)

# Kills all containers and removes them

docker rm $(docker kill $(docker ps -aq))

# Removes all Docker images

docker rmi $(docker images -q)

# Removes all dangling images

docker rmi $(docker images -qf "dangling=true")

# Selects the IDs of all images except "debian" and "my-image" (NR>1 skips the header line)

docker images | grep -v 'debian\|my-image' | awk 'NR>1 {print $3}'

# Shows the IP address of a container

docker inspect container_name | grep IPAddress
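The grep above prints the whole matching JSON line. If only the bare address is needed, for example in a script, docker inspect can be asked for the field directly. This is a sketch, assuming the container is attached to the default bridge network and the Docker version in use still exposes the `.NetworkSettings.IPAddress` field. The helper name `container_ip` is made up.

```shell
# Sketch: print only the IP address of a container instead of grepping
# the full JSON output of docker inspect.
container_ip() {
    docker inspect --format '{{ .NetworkSettings.IPAddress }}' "$1"
}
```

Usage would look like `container_ip container_name`.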

TODOs

I will keep using Docker and hope to find time to blog about it. I need more experience with it. My use cases are mostly in the field of enterprise Java, but not exclusively. When I have more time, I will need a lot of Docker instances on my laptop. Docker will help me organize and separate things in a lightweight way and keep me from doing the same installation twice.

And I’m very interested in your experiences with Docker too.